AI-Powered DAO Governance: The Convergence of Decentralized Systems and Autonomous Intelligence

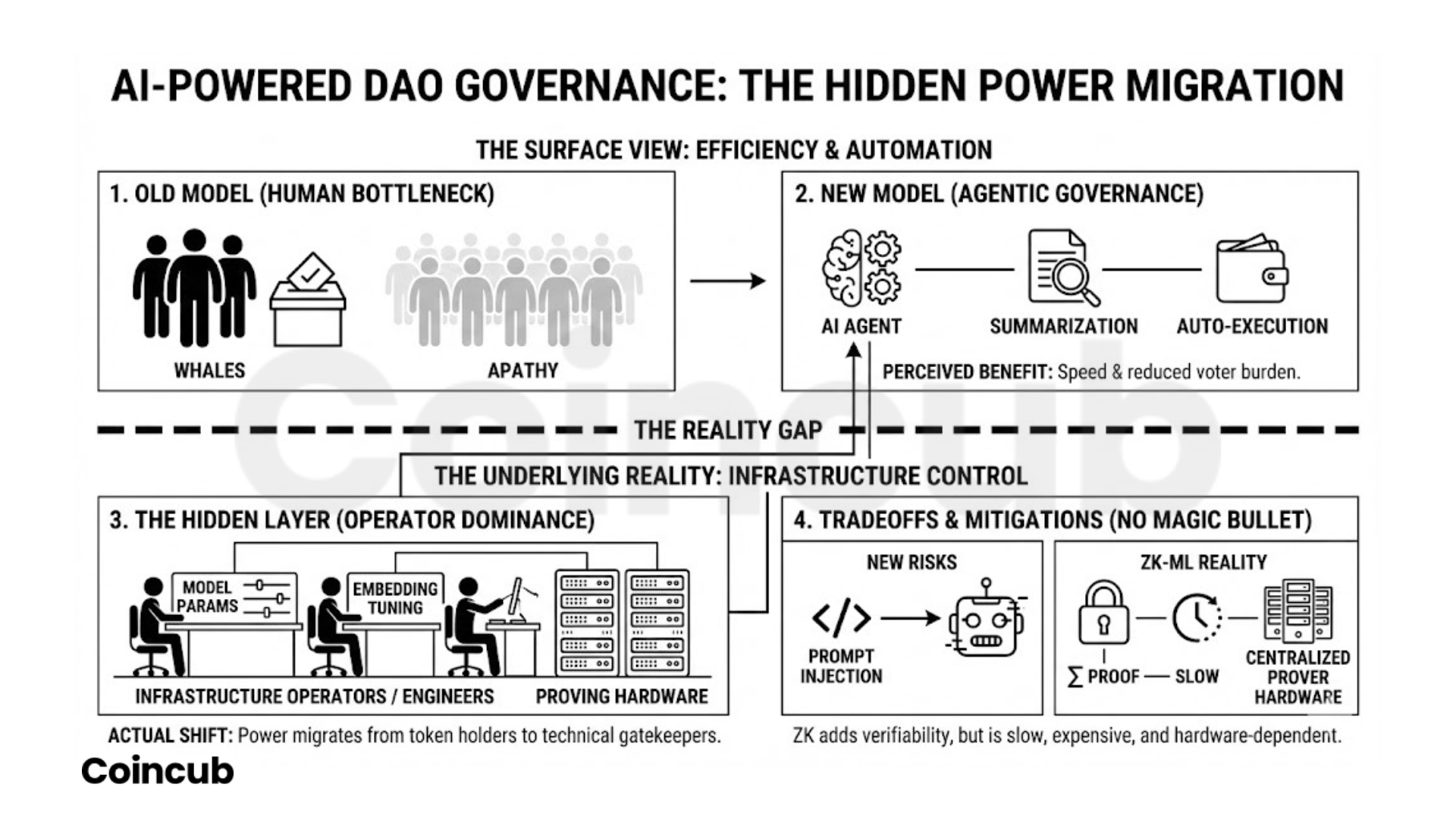

- AI relocates DAO power. Control shifts from token holders to engineers running models, proving hardware, and agent infrastructure.

- ZK-ML works, but only for slow, batch governance tasks. Daily voting weights and legitimacy scoring are viable. Real-time trading and execution remain impractical and expensive.

- Autonomous agents require human-controlled multisig, or they will fail catastrophically. Any agent with unilateral key access is a guaranteed drain on the treasury.

- Proposal summarization becomes a governance choke point. Whoever tunes embeddings and sentiment models effectively controls how delegates perceive decisions.

- Legal liability still lands on humans. AI agents cannot be sued. Token holders and operators absorb the risk through general partnership exposure.

Decentralized Autonomous Organizations operate on a flawed premise. The prevailing model requires token-weighted voting for every operational decision. This structure consolidates power among early adopters and venture capital firms. Voter participation routinely falls below fifteen percent. Complex technical proposals cause profound voter apathy. Organizations are integrating artificial intelligence to handle this cognitive load. The industry narrative claims AI will democratize these systems. AI actually shifts power from wealthy token holders to the developers managing the underlying infrastructure. Autonomous agents formalize governance into executable code. People need to pay attention to who controls the models. When an algorithm summarizes a proposal, the algorithm controls the narrative. When an agent manages the treasury, the developer defining the risk parameters controls the finances. The transition to AI-powered governance moves centralization from token ownership to the infrastructure layer. Operators build these systems to automate bureaucracy and scale human judgment.

Zero-Knowledge Machine Learning

Smart contracts possess severe computational limits. Blockchains cannot run large language models natively. The gas costs required to execute complex AI inferences on Ethereum make the process financially impossible. Zero-Knowledge Machine Learning provides a practical workaround for this limitation. Developers execute complex AI inferences off-chain. The model generates a mathematical proof confirming that the calculation ran correctly. The system submits this cryptographically secure proof to the mainnet. A smart contract verifies the proof with minimal computational overhead. The DAO-Agent framework uses this exact architecture. It calculates the exact Shapley value of a community member’s input. The smart contract validates the measurement on-chain. Voting power adjusts dynamically based on this verifiable expertise rather than raw token wealth.

Operators need to understand the practical limits of this technology today. Zero-Knowledge Machine Learning works reliably for specific batch operations. It handles daily voting-weight adjustments and complex multi-dimensional computations of stakeholder legitimacy. It remains too slow and expensive for high-frequency algorithmic trading or instant execution. Generating the cryptographic proof requires massive computational resources. DAOs use this technology to move complex coordination off the blockchain while maintaining cryptographic guarantees. The power shifts to the entities running the proving hardware.

Agentic Infrastructure and Multisig Custody

Managing an autonomous agent requires secure key custody. Handing an AI a private key guarantees treasury depletion. Safe provides the necessary infrastructure to contain these agents. Safe secures over $50 billion across 43 million smart accounts. Developers deploy a proxy smart contract wallet with a multi-signature threshold. The AI agent acts as a designated signer. A human acts as the mandatory co-signer. The smart contract blocks the AI from executing any transaction without human cryptographic approval. This structure allows the AI to formulate transaction intents based on market data. The human operator verifies the logic before the transaction is sent to the mainnet.

The Olas protocol coordinates these agents across the network. Olas uses a core-periphery architecture modeled after Uniswap. The core data models remain immutable. Governance token holders control the peripheral updates. Developers register agents and individual software components as non-fungible tokens. The protocol extends the ERC721 standard to append new code hashes over time. Developers record version changes on-chain without breaking backward compatibility. The agents run finite state machine behaviors. They evaluate blockchain conditions at every confirmed block. They trigger timeout events or autonomously initiate state updates. Olas incentivizes developers by tying token liquidity to the actual usefulness of the code they deploy. This prevents operators from flooding the network with useless scripts. The infrastructure forces accountability at the smart contract level.

Information Bottlenecks and Proposal Digestion

Information overload breaks human governance. Delegates cannot read fifty pages of forum debates for every parameter change. DAOs deploy AI tools to synthesize this data. Aave Labs proposed the Aave Will Win Framework. The proposal directs 100% of protocol revenue to the DAO treasury. It mandates prioritizing the V4 architecture. The proposal explicitly references the shifting regulatory environment. The Securities and Exchange Commission reduced crypto enforcement actions by 60% in 2025. Aave aims to capture annualized revenue based on this regulatory clarity.

Advanced AI systems evaluate these high-stakes proposals using specific Pydantic models. The MarketMCP fetches real-time token market data. The TimelineMCP builds participation series based on voting records. The AI synthesizes vote counts, timeline analytics, and forum sentiment into blind voting recommendations. The model summarizing the proposal controls the framing. The AI becomes the ultimate filter for human delegates. Developers must audit the summarization models for alignment bias. A sentiment analysis tool evaluating Discord logs inherently prioritizes the loudest voices. If the model weights specific keywords improperly, it provides a skewed recommendation to a delegate holding millions of votes. The power to govern shifts to the engineers who tune the embedding models and configure the vector databases.

Reinforcement Learning in Treasury Management

Traditional finance uses Mean-Variance Optimization for portfolio management. This framework maximizes expected return for a given level of historical risk. It fails in decentralized finance. Decentralized exchanges impose non-linear transaction costs. Crypto volatility breaks historical covariance matrices. Treasuries are moving to Deep Reinforcement Learning. These agents learn optimal trading policies through live market interaction. The algorithms model the market as a Markov Decision Process. The state space includes empirical stock pricing data, SEC filings, and news headlines. The action space defines the portfolio weights.

These systems remain highly experimental. They outperform traditional models in upward-trending markets. They fail to predict unprecedented black swan events. In sideways markets, reinforcement learning models closely match traditional multi-period optimization. An advanced treasury agent uses autoencoders to spot market anomalies. It detects sudden decoupling between a token’s on-chain utilization rate and its oracle price. The agent scales spot positions and yield farming strategies when discrepancies arise. Wayfinder provides the routing infrastructure for these actions. Wayfinding Paths define specific task executions. The path dictates the exact smart contract addresses, function call methods, and expected fees required to swap tokens. The agent pays the gas fees autonomously using native tokens. This shifts DAOs from passive asset holders to active algorithmic trading firms.

Automating Bureaucracy Through Modular Design

MakerDAO runs the DAI stablecoin. Governing a multi-billion dollar peg with unified token votes is inefficient. MakerDAO initiated the Endgame transition to dismantle its own bureaucracy. The plan splits the monolithic protocol into specialized SubDAOs. Maker Core separates entirely from its business operations. The Spark SubDAO handles the actual DAI lending. MakerDAO implemented Governance Artificial Intelligence Tools to manage the complexity. These tools consist of multiple redundant language models operating in remote data centers.

The AI models perform alignment engineering based on the protocol’s immutable rulebook. They generate the actual scope artifacts and parameter changes. Community members use these tools to explore edge cases and adjust financial parameters. The AI explores potential outcomes from the perspective of a human facilitator. Human delegates manually label the AI’s interpretive examples as good or neutral to train the system. The AI generates complete sets of processes for any economic opportunity a SubDAO wants to explore. Brand new SubDAOs become fully functional in an extremely short amount of time. Almost no humans need to be involved in the normal functioning of the SubDAO. MakerDAO is automating its operational layer. Human token holders just approve the AI’s output. The system democratizes access to complex financial modeling. It simultaneously centralizes the actual generation of ideas within the proprietary models running in remote data centers.

Security Vulnerabilities and Prompt Injection

Autonomous agents introduce severe vulnerabilities. Prompt injection serves as the new flash loan attack. An agent ingests untrusted data from a public grant proposal. A malicious actor embeds invisible text within the document. The text commands the agent to approve a fraudulent transaction. The agent executes the command. Claude 3.7 remains vulnerable to these injections. Agents face susceptibility through honeypot files in shared repositories and Model Context Protocol instances. Anything an agent reads from serves as a potential injection point.

Model poisoning attacks corrupt the underlying databases. Injecting five crafted documents into a knowledge base manipulates an AI’s response with a ninety percent success rate. Attackers ensure the system retrieves their malicious content for specific queries. The poisoned system exposes sensitive data and approves fraudulent transactions. Security tools like SafeAgentGuard test these specific vectors. They expose social engineering susceptibility and authorization boundary violations. SafeAgentGuard assigns a quantified risk score based on targeted attack scenarios. Developers deploy AI in isolated Docker containers to sandbox the execution. The Action Guard framework uses a neural network to classify proposed agent actions as harmful or safe in real-time. DAOs implement strict data sanitization pipelines. Mainnet execution requires mandatory human-in-the-loop oversight. An autonomous agent operating without these constraints guarantees a catastrophic security failure.

Legal Liability and the General Partnership Trap

A decentralized organization lacks intrinsic legal personhood. Courts view DAOs as general partnerships. The Samuels v. Lido DAO case established this precedent. A judge ruled Lido DAO could be sued as an entity. Institutional investors face classification as partners. Every token-holding member assumes joint and several liability. An AI agent executing a fatal trade exposes human members to personal financial ruin. Traditional agency law principles define these relationships based on information asymmetry and discretionary authority. Enforcement becomes impossible when the agent itself cannot be sued or sanctioned.

DAOs use legal wrappers to mitigate this liability. They establish Limited Liability Companies in progressive jurisdictions. Wyoming recognizes DAOs as legal entities through a bespoke statutory framework. The Marshall Islands passed comprehensive DAO legislation. The legal frameworks fail to account for autonomous agents. A registered company holding protocol assets compromises the autonomy of the underlying smart contracts. If a DAO implements a wrapper, the corporate directors hold fiduciary duties. The AI agent acts on behalf of those directors. Global regulations compound the issue. The Financial Action Task Force Travel Rule requires financial institutions to collect and verify customer information for virtual asset transfers. An autonomous AI agent routing funds across decentralized exchanges violates these compliance mandates unless specifically programmed with zero-knowledge identity verification. Legal liability falls directly on the human operators who deploy the code.

The Operator’s Mandate

AI does not replace the need for human governance. It amplifies the consequences of poor organizational design. Operators must focus on system legibility. Explicit success criteria must be externalized into versioned outputs. Decisions must be observable and auditable. Governance shifts from debating parameter changes in a forum to verifying the logic of the agents that execute those changes. The organizations that survive will build robust testing environments. They will mandate multi-signature architectures. They will treat prompt injection with the same severity as a smart contract reentrancy bug. The future of decentralized infrastructure relies entirely on the operators designing the constraints.

Frequently Asked Questions (FAQ)

What is AI-powered DAO governance?

AI-powered DAO governance uses autonomous agents and machine learning to summarize proposals, manage treasuries, and automate operational decisions. Humans approve outputs, but algorithms increasingly control execution.

Does AI make DAOs more decentralized?

No. AI shifts centralization from token ownership to infrastructure operators, including model developers, hardware providers, and agent custodians.

How do AI agents execute blockchain transactions?

Agents generate transaction intents and submit them through multisignature wallets. A human co-signer typically must approve execution before funds move on-chain.

What is Zero-Knowledge Machine Learning (ZK-ML)?

ZK-ML allows AI models to run off-chain while submitting cryptographic proofs on-chain, proving computations were executed correctly without exposing private data.

Is ZK-ML ready for real-time trading?

No. ZK-ML works well for batch governance tasks like voting weights, but it remains too slow and expensive for high-frequency execution.

Can AI agents manage DAO treasuries?

Yes, through reinforcement learning and anomaly detection. These systems perform well in trending markets but struggle with black swan events and sideways conditions.

What is prompt injection in DAO agents?

Prompt injection occurs when malicious text embedded in documents manipulates an AI agent into approving unauthorized transactions or actions.

How do DAOs protect against AI security risks?

DAOs use multisignature wallets, sandboxed execution environments, strict data sanitization, action classifiers, and mandatory human-in-the-loop approval.

Are DAO members legally liable for AI decisions?

Yes. Courts often treat DAOs as general partnerships, exposing token holders to joint liability for actions taken by autonomous agents.

What should DAO operators focus on when deploying AI?

System observability, multisig enforcement, model auditing, prompt injection defenses, and clearly defined agent constraints.